Apple Music is a big change. No matter what you used for music previously, Apple Music is different. It’s an exciting service, and it’s doing a great job of getting me back into music. But it’s also confusing.

I’ve spent a lot of time with Apple Music lately, and I wanted to share some things I learned that have helped me get comfortable with it.

Think of the tabs as separate apps. This is an important point, because sometimes what you can do depends on where you are.

If you tap on a tab, it will take you to where you last were on that tab (a playlist or an artist, for example), but tapping the tab again will take you back to that tab’s home. Remember that one; it’s useful.

Curated Playlists

The curated playlists are a big part of Apple Music, but conceptually you can think of them as albums. Unlike algorithmic music services, these playlists are fixed length, typically about 80 minutes, and are assembled by humans, so they tend to be a pleasant collection of songs that work well together.

This means you can do things with playlist suggestions like add them to your music library and make the songs in them available for offline listening, something you can’t do with a radio station.

For You

This is Apple Music’s main recommendation engine. Using your preferences and some other data (it’s not exactly clear what, but I believe your previous play history has something to do with it), Apple Music adds albums and curated playlists to the For You recommendation list.

This list keeps growing as you use the app, so you don’t have to worry about losing recommendations as new ones become available.

Right now, for me, “Modern Breakup Songs” and “Rush 80’s” are my top For You recommendations. If you don’t like a recommendation, tap and hold on it for a second, and the menu that appears has an “I Don’t Like This Suggestion” item. Tap that, and hopefully you’ll see fewer suggestions like that in the future (although in practice, it hasn’t made a lot of difference in my case, yet).

Pull to refresh. If there are new suggestions, they will appear at the top.

The plus button adds stuff to your iTunes Music Library, essentially adding it to the My Music tab. This doesn’t make it “your” music the same way purchasing it does, which is a bit confusing. What you’re adding is more like a shortcut to the music in Apple Music, one that will disappear if you ever unsubscribe.

Radio

There are two things here: Beats 1, which is live and which you have no control over, and algorithmically generated radio stations.

If you tap on a station, like “Pop Hits”, the app will start playing an appropriate song. If you don’t like it, you can skip it (although skipping it does nothing to change the content that the station will play). There’s no way to feed back that it made a bad choice.

You can create a new radio station from a song or album. I don’t know if there’s any human curation involved in these playlists, but they seem roughly equivalent to the same feature on Spotify.

Now Playing

When you’re listening to a song, there’s a bar above the tabs that shows the song that’s currently playing.

Tap the heart to indicate that you love a song. This feedback is used to help improve the suggestions on the For You tab, and apparently has nothing to do with radio stations.

Tap and hold the fast forward or rewind button to skip forward or back within the current song, instead of instantly switching songs.

One question I have about the heart, and liking music, is what happens when a song is on more than one album. Liking it in one place doesn’t automatically like it in the others, so what does that mean for how Apple Music uses that data for recommendations?

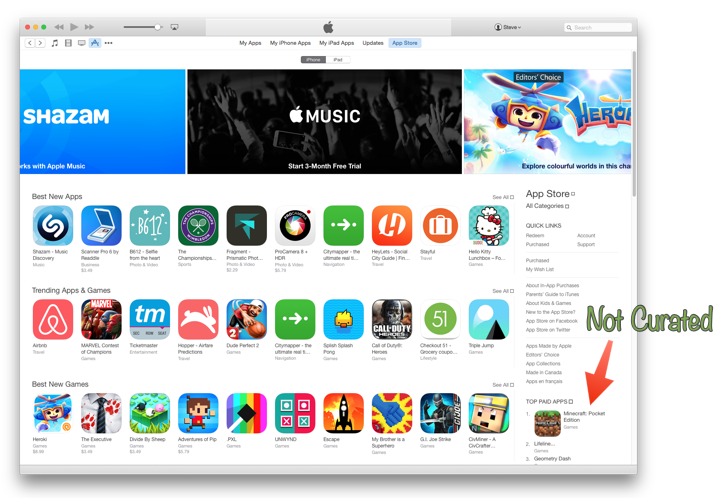

New

New is a weird tab.

Here you can find new music. But you can also find Apple Editor’s Playlists, Activity Playlists, and Curator Playlists.

There’s a drop-down at the top for picking a genre, which changes some of the content on the tab, but not all of it. The curated playlists don’t seem to change, but the top lists and other content do change to match the genre you picked.

Honestly, I spend very little time on this tab, except when I want to find music to match a mood (something Spotify was pretty good at). There are a lot of great playlists in here, but you need to tap the New tab, scroll to Activity Playlists, and then pick a genre to get to them.

The “Hot Tracks” and other top playlists don’t take my taste into account at all, and so are generally useless. There’s definitely an opportunity to do better here.

Connect

Connect is a curiosity at this point. Sometimes there’s interesting stuff in there, but I don’t know how valuable it will be.

Clean vs Explicit

Apple Music respects the Allowed Content setting under Settings / General / Restrictions in the system Settings app. It’s a shame there’s no easy way to toggle this, because for me it really depends on “are there kids in the car”.

Search

Search is global: it doesn’t matter where you are in the app when you hit the search button. The app will present search results from all over the service, including curated playlists, music videos, and results from your own music library. It works pretty much as you’d expect.

Offline

Figuring out exactly what you can listen to offline isn’t obvious, but you can take any song, album, or playlist in the My Music tab, tap its menu button to show the menu, and then pick “Make Available Offline”. This will copy the files to your device. You can change a setting to indicate whether Apple Music is allowed to use cellular data to stream music, but unfortunately, like the Clean setting, this is buried off in the system Settings app, so it’s awkward to change on the fly.

If a curated playlist calls for a song that you have locally on your device, then it will play the local copy. Seems obvious, but I think this is a cool feature.

Overall

I have a lot of music. Over the years I’ve bought hundreds of albums, and they’re all ripped and in my music library, but the challenge has been finding a way to really enjoy all this music. Genius tried, but wasn’t very good. Spotify didn’t seem to really care much about my music collection, nor did Rdio.

Apple Music often suggests I listen to music I already have, often music that I haven’t listened to in ages, and then plays it from my local copy.

With Apple Music, I’m finding it’s easier to just get some music playing that I like, and that’s what it’s all about.